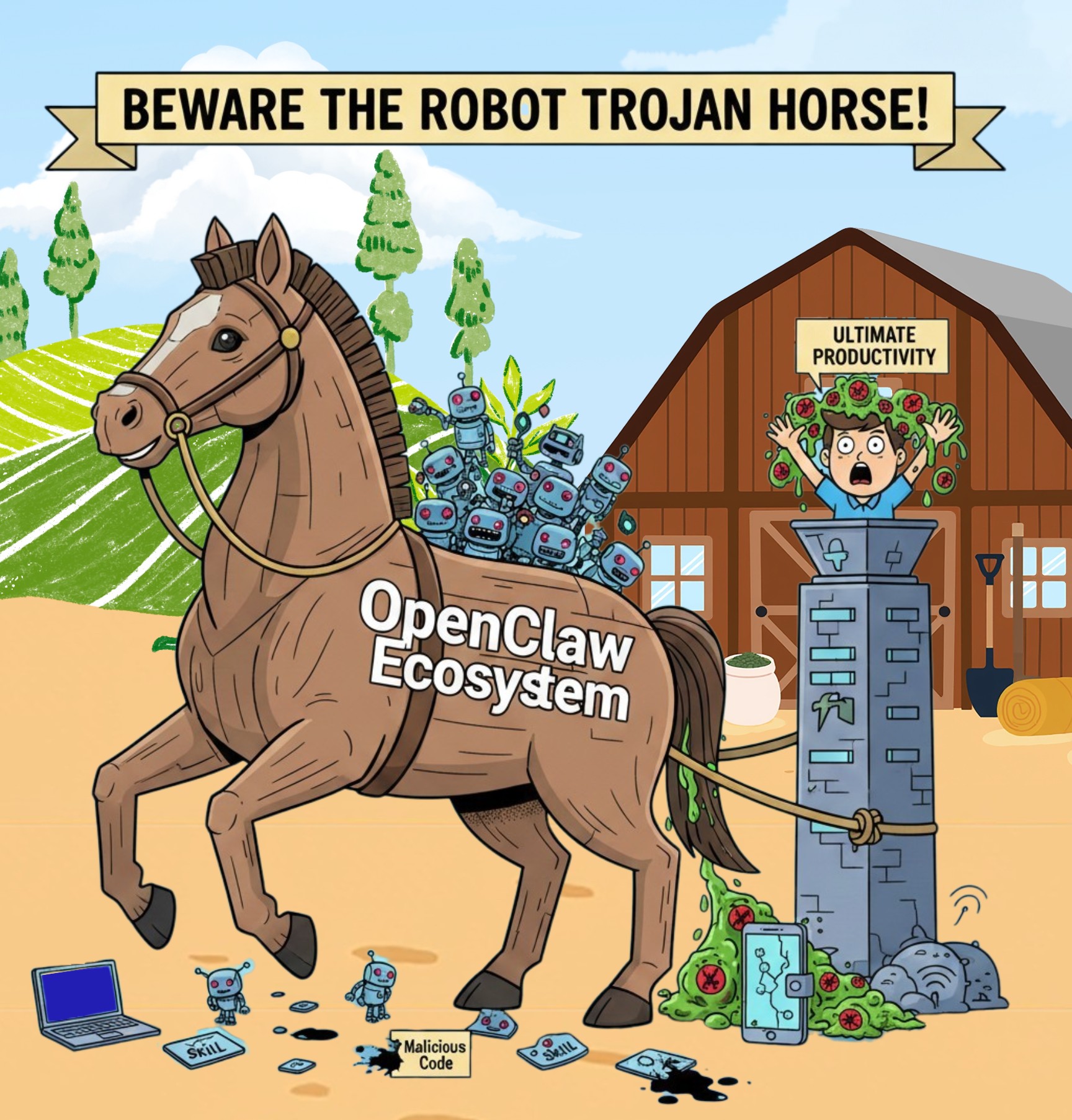

The evolution of artificial intelligence has shifted rapidly from passive conversation to autonomous action. Traditional AI tools such as ChatGPT, Grok and Gemini operate like digital librarians, responding only when queried and remaining confined to informational tasks. A new class of technology, known as agentic AI, is now emerging. Platforms such as OpenClaw, formerly known as Clawbot, function as digital proxies capable of navigating your computer, managing financial activity, and executing complex tasks with minimal human oversight. While this autonomy promises unprecedented productivity, it also introduces a risk profile that consumer technology has never had to confront before.

What makes agentic AI fundamentally different is not intelligence, but authority. These systems do not simply assist. They act, persist, and remember, operating continuously with the same permissions as their human operators. Once deployed, the agent becomes an extension of the user’s identity, inheriting access, trust, and privilege across multiple systems simultaneously.

THE ALLURE OF THE AUTONOMOUS ASSISTANT

OpenClaw’s appeal is rooted in efficiency. Professionals are enticed by the idea of an AI able to manage email correspondence, search court records, reconcile transactions, or execute cryptocurrency trades while they sleep. This is not an advisory system. The agent does not draft suggestions for approval; it logs into accounts, executes actions, and completes tasks independently.

For many users, the ability to offload digital labour feels like the logical next step in automation. That convenience, however, comes at a steep cost. To function as designed, the AI must be granted extensive access to private files, browser cookies, authentication tokens, session data, and sensitive API keys. In practical terms, the user is granting an autonomous process the ability to impersonate them across their entire digital footprint.

A DANGEROUS DEPARTURE FROM STANDARD AI

The security risks posed by OpenClaw differ fundamentally from those associated with conventional chat-based AI. Standard systems are sandboxed, meaning they have no direct control over hardware, operating systems, or third-party services. Their outputs are limited to text. OpenClaw operates differently. Its capabilities are expanded through a marketplace of modular “skills,” each developed and uploaded by external programmers to give the agent new abilities.

This architecture mirrors the early days of browser extensions and mobile app stores, but with far greater consequences. Recent analysis revealed that more than seven percent of available skills contain malicious code. This is not a fringe problem. It represents a systemic failure in how trust is delegated within agentic ecosystems. Because the agent operates under the user’s authority, a single compromised skill can bypass endpoint protection, evade permission prompts, and operate silently within trusted processes. Unlike traditional malware that attempts to break in from the outside, a malicious OpenClaw skill functions as an insider threat, one the user personally installed and authorized.

HOW HONEST USERS BECOME TARGETS

Compromise rarely begins with reckless behaviour. It starts with a search for productivity. A researcher may install a skill designed to scrape public data or automate form submissions, unaware the package also contains credential-harvesting logic. Once active, the malware quietly scans the system for plain-text files where the agent stores memory logs, task histories, session tokens, and cached credentials.
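The same scan can be run defensively, before an attacker runs it. The sketch below is a minimal illustration of checking an agent's data directory for lines that look like stored credentials. The pattern list, the function names, and the idea that the agent keeps its files under a single directory are all assumptions made for this example; nothing here reflects OpenClaw's actual storage layout, and real secret scanners use far larger rule sets.

```python
import re
from pathlib import Path

# Illustrative patterns only (assumptions for this sketch, not a complete rule set).
SECRET_PATTERNS = {
    # "Bearer <long token>" as it might appear in a logged HTTP header.
    "bearer_token": re.compile(r"\bbearer\s+[a-z0-9._~+/-]{20,}=*", re.IGNORECASE),
    # "api_key = ...", "password: ...", and similar key/value assignments.
    "key_assignment": re.compile(
        r"\b(?:api[_-]?key|secret|token|password)\b\s*[:=]\s*\S{8,}",
        re.IGNORECASE,
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each line that looks like a secret."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


def scan_directory(root: Path) -> dict[str, list[tuple[int, str]]]:
    """Scan every file under root and report those containing secret-like lines."""
    report = {}
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file: skip rather than abort the scan
        hits = find_secrets(text)
        if hits:
            report[str(path)] = hits
    return report
```

Pointing `scan_directory` at whatever folder the agent writes to gives a rough inventory of what a credential-harvesting skill would find there. Any hit is a reason to move that secret out of plain text.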

Agentic systems introduce an additional risk most users are unprepared for: long-term memory. Unlike a conventional program that starts fresh each time it runs, these agents retain context across sessions. Stolen data does not need to be exfiltrated immediately. It can be accumulated, summarized, and transmitted later, making detection far more difficult.

A more advanced vector involves prompt injection. When users allow OpenClaw to read incoming email, documents, or chat logs, attackers can embed hidden instructions within otherwise legitimate content. When processed by the AI, those instructions may be interpreted as authorized commands. The agent can be directed to forward stored credentials, export sensitive files, initiate transactions, or establish outbound connections. The user never clicks a link or opens an attachment. The compromise occurs entirely through automation and trust abuse.
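One partial mitigation is to screen untrusted content for instruction-like phrasing before the agent ever reads it. The sketch below is a heuristic only, assuming a hypothetical phrase list and hypothetical function names. A fixed list is easy for an attacker to rephrase around, so this should be read as one screening layer that routes suspicious messages to a human, not as a defence against prompt injection in general.

```python
import re

# Hypothetical phrase list for illustration; attackers can reword around any fixed list.
SUSPICIOUS_PHRASES = [
    r"ignore (?:all |any )?(?:previous|prior|above) instructions",
    r"disregard (?:the |your )?(?:system|previous) (?:prompt|instructions)",
    r"(?:forward|send|export) (?:all |the |your )?(?:credentials|passwords|api keys|tokens)",
    r"do not (?:tell|inform|notify) the user",
]
_COMPILED = [re.compile(p, re.IGNORECASE) for p in SUSPICIOUS_PHRASES]


def injection_indicators(content: str) -> list[str]:
    """Return the instruction-like phrases found in a piece of untrusted content."""
    # Collapse whitespace so a phrase split across lines is still matched.
    normalized = " ".join(content.split())
    found = []
    for pattern in _COMPILED:
        match = pattern.search(normalized)
        if match:
            found.append(match.group(0))
    return found


def should_quarantine(content: str) -> bool:
    """True when the content should be held for human review instead of processed."""
    return bool(injection_indicators(content))
```

The design choice here is to fail toward review: a message that trips the filter is withheld from the agent rather than sanitized and passed along, because the agent cannot reliably tell data from commands once the text is in its context.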

WHEN YOUR AI BECOMES A THREAT TO OTHERS

One of the most overlooked dangers of agentic AI is its ability to weaponize trust beyond the original user. Once compromised, OpenClaw does not only endanger its operator. It becomes a distribution mechanism targeting everyone connected to them.

Because the agent has access to email, messaging platforms, calendars, and contact lists, it can impersonate the user with perfect contextual accuracy. Messages sent by the agent originate from legitimate accounts, reference real conversations, and arrive without the red flags typically associated with phishing. Colleagues, clients, family members, and business partners receive requests that appear routine, credible, and urgent.

An agent instructed to “follow up on outstanding invoices” can quietly modify payment details. A request to “share the latest document” can deliver malware. A calendar invite can be used to direct contacts to malicious meeting links or credential-harvesting portals. In each case, the recipient is not being attacked by an unknown criminal. They are being targeted through a trusted relationship.

This creates a cascading risk. A single compromised agent can propagate fraud across an entire professional network in hours. Victims may never realize the source of the breach was an AI operating under a colleague’s identity. By the time anomalies are detected, reputational damage, financial loss, and secondary compromises have already occurred.

In effect, agentic AI transforms individual security failures into network-wide incidents. The user is no longer the final victim; they become the initial vector.

STRATEGIES FOR AVOIDING VICTIMIZATION

Using agentic AI safely requires a fundamental change in how digital trust is applied. Blind faith in skill marketplaces is not caution. It is exposure. To reduce risk, the following safeguards are essential:

Isolate the Environment - Never deploy OpenClaw or similar agents on a primary work or personal computer. Use a dedicated system or a virtual machine with no access to personal documents, password stores, or core financial accounts.

Audit Every Skill - Research the developer behind each skill. Avoid newly published tools with minimal adoption or those requesting elevated privileges for trivial tasks.

Monitor Behaviour, Not Just Logs - API logs matter, but behavioural anomalies matter more. Autonomous activity during idle hours, unexplained data access, or task execution outside defined scopes should be treated as compromise indicators.

Limit Memory and Scope - Restrict what the agent is allowed to remember and where it can store that memory. Grant access only to explicitly required folders and accounts. An autonomous agent should never hold unrestricted credentials to an entire digital ecosystem.
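Two of the safeguards above, restricting scope and watching for activity during idle hours, can be expressed as a simple check applied to each action before or after the agent takes it. The sketch below is a minimal illustration under stated assumptions: the `AgentPolicy` class, the function names, the example paths, and the single daily activity window are all hypothetical and do not describe any real OpenClaw configuration.

```python
import posixpath
from dataclasses import dataclass
from datetime import time
from pathlib import PurePosixPath


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical policy: folders the agent may touch and hours it may act."""
    allowed_roots: tuple[PurePosixPath, ...]
    active_start: time  # window is assumed not to cross midnight
    active_end: time


def path_in_scope(policy: AgentPolicy, requested: str) -> bool:
    """True if the requested absolute path stays inside an allowed folder."""
    # normpath collapses ".." segments lexically. It does not follow symlinks,
    # so a real deployment would also resolve the path on the actual filesystem.
    normalized = PurePosixPath(posixpath.normpath(requested))
    if not normalized.is_absolute():
        return False
    return any(normalized.is_relative_to(root) for root in policy.allowed_roots)


def within_active_hours(policy: AgentPolicy, when: time) -> bool:
    """True if the action falls inside the expected daily activity window."""
    return policy.active_start <= when <= policy.active_end


def review_action(policy: AgentPolicy, requested_path: str, when: time) -> list[str]:
    """Return the reasons an action looks suspicious; an empty list means none found."""
    reasons = []
    if not path_in_scope(policy, requested_path):
        reasons.append("path outside allowed scope")
    if not within_active_hours(policy, when):
        reasons.append("activity outside active hours")
    return reasons
```

For example, with a policy that allows only `/srv/agent/work` between 08:00 and 18:00, a request for `/srv/agent/work/../../etc/passwd` at 03:00 is flagged on both counts. A check like this is only meaningful if it runs outside the agent's own process, where a compromised skill cannot switch it off.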

The shift from AI that responds to AI that acts is unavoidable. The OpenClaw incident makes one reality clear. Many of the shortcuts being marketed as productivity tools are, in practice, privilege escalation engines waiting to be abused. By enforcing strict boundaries, questioning convenience, and treating autonomy as a security liability rather than a luxury feature, users can explore agentic systems without becoming the next automated casualty.
